llama2

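2024/4/25 8:16:28

Paper Reading: Mistral 7B

Paper Reading: Mistral 7B. Contents: 1. Overview; 2. Model architecture (1. SWA (Sliding Window Attention), 2. Rolling Buffer Cache, 3. Pre-fill and Chunking); 3. Experiments & conclusions (1. Basic experiments, 2. Instruction Tuning, 3. Safety analysis); 4. Summary & thoughts. Paper link: https://…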
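The outline above names the Rolling Buffer Cache but the snippet is cut off before describing it. As a rough illustration only, and assuming the usual description of the idea (a fixed-size cache of `window` slots in which the entry for position `i` is written to slot `i mod window`, overwriting the oldest entry), a minimal sketch could look like this. The class name `RollingBufferCache` and its methods are hypothetical and are not taken from the article or from any Mistral code.

```python
class RollingBufferCache:
    """Hypothetical fixed-size cache: position i is stored in slot i % window."""

    def __init__(self, window):
        self.window = window            # assumed attention window size W
        self.slots = [None] * window    # fixed memory, never grows
        self.count = 0                  # number of positions seen so far

    def append(self, item):
        # Overwrite the slot of the position that just fell out of the window.
        self.slots[self.count % self.window] = item
        self.count += 1

    def contents(self):
        # Return the cached items ordered from oldest to newest position.
        n = min(self.count, self.window)
        start = self.count - n
        return [self.slots[p % self.window] for p in range(start, self.count)]


cache = RollingBufferCache(window=3)
for position in range(5):
    cache.append(position)   # real code would store key/value tensors here
print(cache.slots)
print(cache.contents())
```

The point of the design is that memory stays bounded by the window size regardless of sequence length; the integers stored here only stand in for per-position key/value entries.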

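[AIGC] Fine-Tuning the Llama2-7B-Chat Model

Environment

Fine-tuning framework: LLaMA-Efficient-Tuning. Training machine: 4 × RTX 3090 Ti (24 GB VRAM). Python environment: Python 3.8, with the dependencies from requirements.txt installed.

I. LoRA Fine-Tuning

1. Prepare the dataset 2. Train and test

1) Create the model output directory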

mkdir -p models/llama2_7b_chat…

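Running llama2 Inference Locally on the CPU

Introduction

This tutorial deploys and runs the llama2 model in C, which allows efficient inference on the CPU. It covers: 1. Setting up the runtime environment (C and Python) 2. Converting the original llama2 model to a binary format 3. Running llama2 inference in C

Environment Installation and Configuration

Project download: git clone https://github.com/karpathy/llama2.c.git Operating sys…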

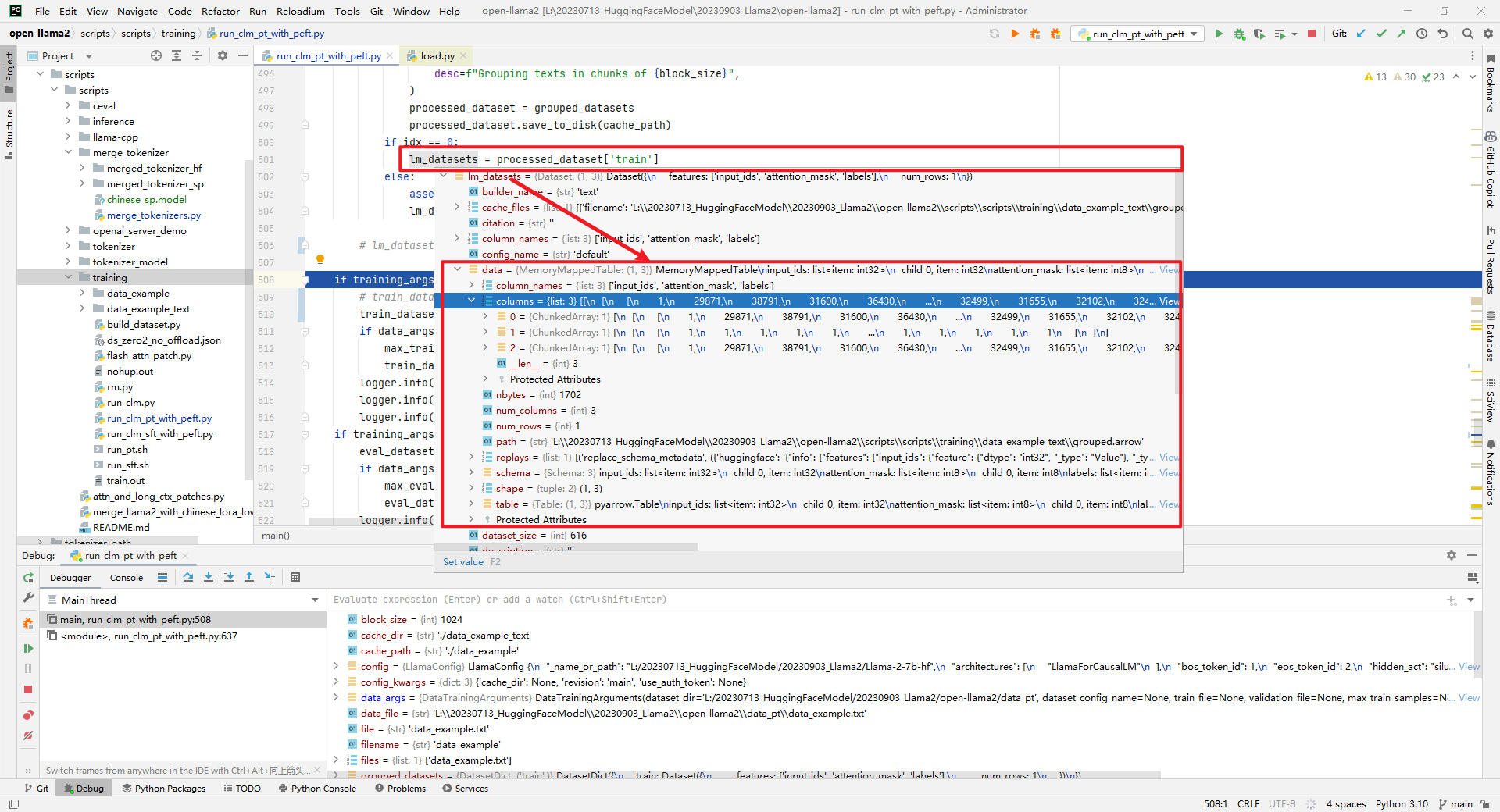

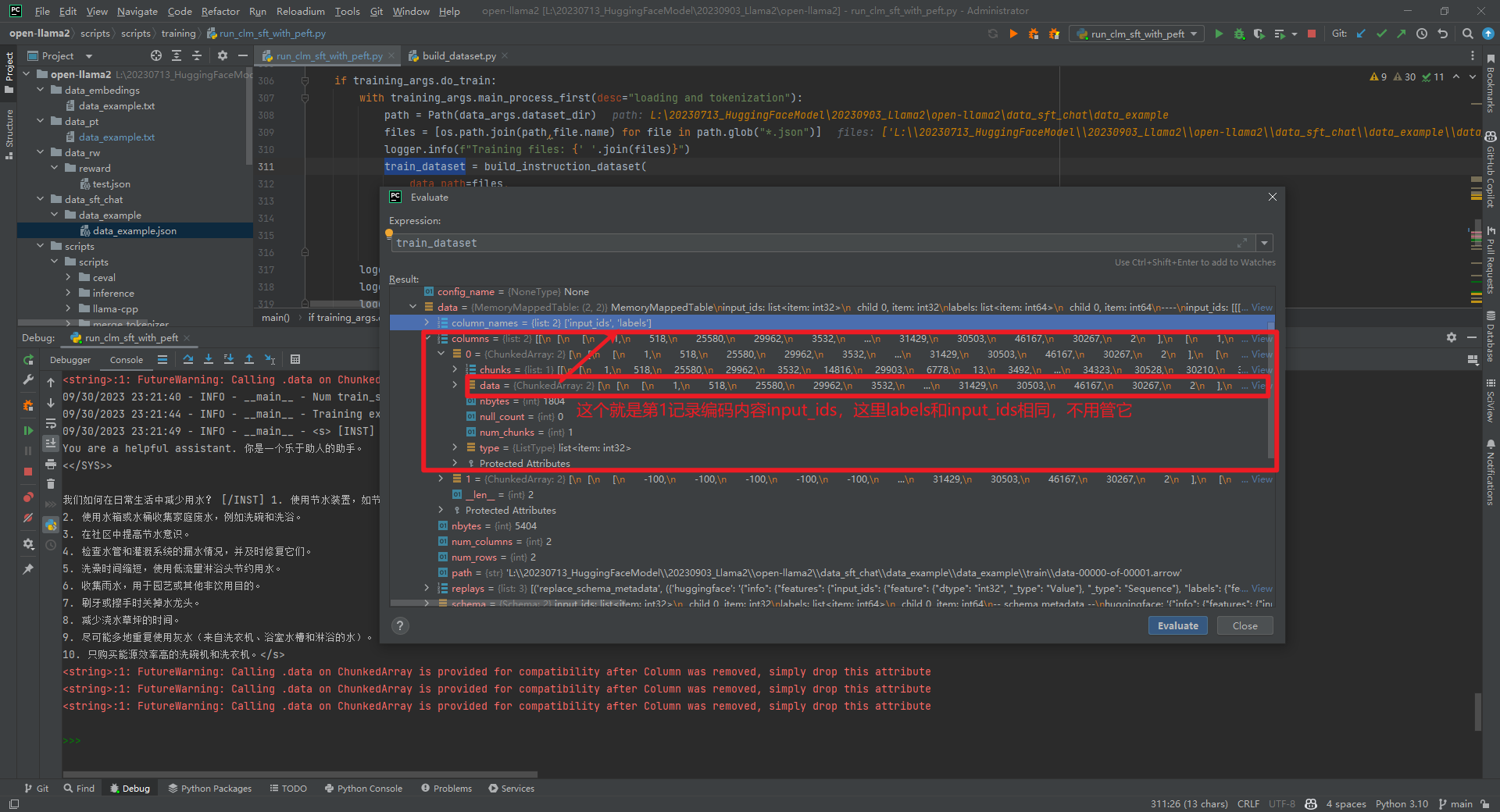

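Llama2-Chinese Project: 2.3 - Should Pretraining Use a QA or a Text Dataset?

The Llama2-Chinese project provides its pretraining data in QA format, which raises a question: shouldn't pretraining data be in Text format? By contrast, Chinese-LLaMA-Alpaca-2 and open-llama2, which pretrain with LoRA, provide their pretraining data in Text format. The inference, then, is that during pretraining the QA and Text data formats…

Llama2-Chinese Project: 4 - Quantized Models

I. Calling the quantized model. Below is an example that calls FlagAlpha/Llama2-Chinese-13b-Chat-4bit[2], the 4-bit compressed version of FlagAlpha/Llama2-Chinese-13b-Chat[1]: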

from transformers import AutoTokenizer

from auto_gptq import AutoGPTQForCausalLM

model = AutoGPTQForCausalLM…

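A Local Deployment Tutorial for llama2, the GPT-4 Alternative: Build Your Own Large Model

llama2 is a large language model released by Meta, and it is an open-source, free AI model. Being open source alone already puts it ahead of GPT. In terms of performance, it is even billed as an alternative to GPT-4.

This article introduces llama2 from the following angles:

1 Basic introduction

2 Deploying llama2 locally on a Mac

Official llama2 website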

https://llama…

Chinese-llama-2 Deployment Pitfalls

Chinese-llama-2 Deployment Pitfalls. Contents: 1. Chinese-LLaMA-Alpaca-2; A. Deployment (a. inference_with_transformers_zh, b. text generation webui_zh, c. api_calls_zh, d. llamacpp_zh, e. privategpt_zh, f. langchain_zh); Tool; Github. 1. Chinese-LLaMA-Alpaca-2

A. Deployment

a. inference_with_transform…

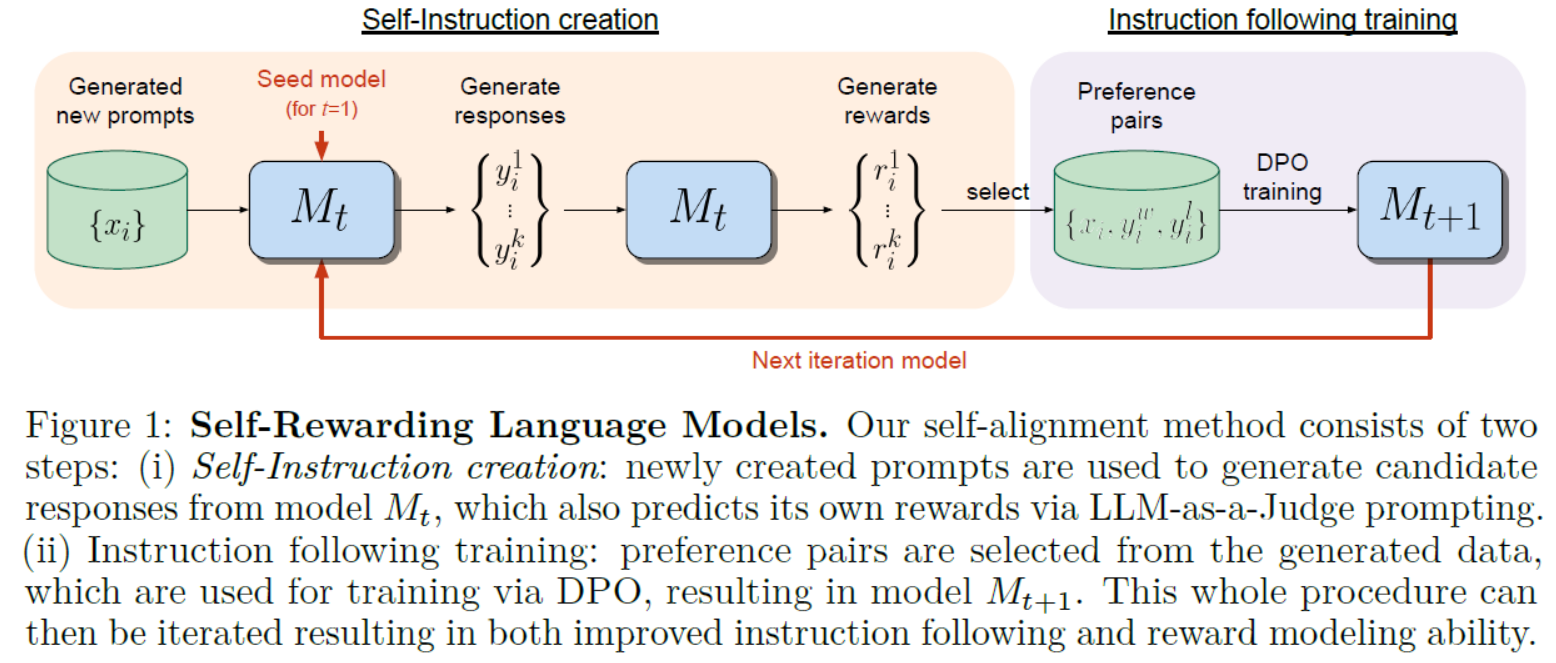

LLMs: Llama2 70B: Translation and Commentary on "Self-Rewarding Language Models"

LLMs: Llama2 70B: Translation and Commentary on "Self-Rewarding Language Models". Contents

Translation and Commentary on "Self-Rewarding Language Models"

Abstract

5 Conclusion

6 Limitations. Translation and Commentary on "Self-Rewarding Language Models". Links. Paper link: …

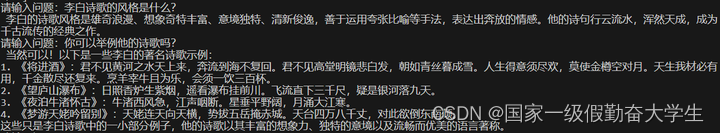

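Llama2-Chinese Project: 1 - Project Introduction and Model Inference

How Atom-7B relates to Llama2: Atom-7B is an open-source large model built by pretraining Llama2 on Chinese. Why the name "Atom"? Because atoms give rise to all things, and the Llama Chinese community hopes the Atom model can become a basic building block of the AI world. The community has released 6 models so far, listed below: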

FlagAl…

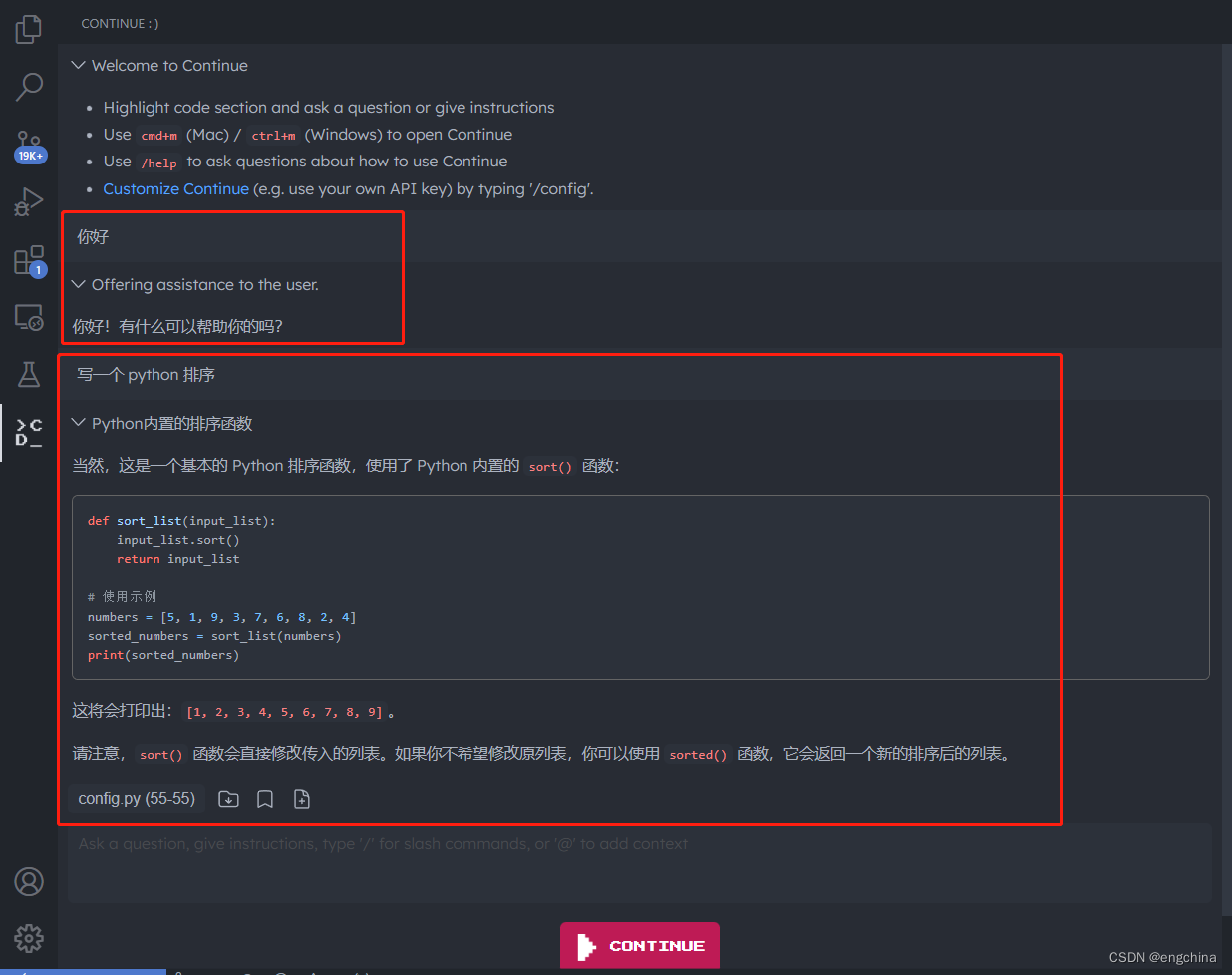

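Deploying CodeLlama Locally and Using It in VSCode

Deploying CodeLlama Locally and Using It in VSCode. Contents: 1. What is CodeLlama; 2. CodeLlama GitHub repository; 3. Downloading the CodeLlama model; 4. Deploying CodeLlama; 5. Using CodeLlama in VSCode. 1. What is CodeLlama

Code Llama is a family of large language models for code based on Llama 2; among open models, …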

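Llama2-Chinese Project: 3.1 - Full-Parameter Fine-Tuning

Code is provided for both LoRA fine-tuning and full-parameter fine-tuning. The training data is data/train_sft.csv and the validation data is data/dev_sft.csv, in the following format:

"<s>Human: "question"\n</s><s>Assistant: "answer For example, as shown…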
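As a small illustration of the data format quoted above, the sketch below builds one training string from a question and an answer. The helper name `format_sft_sample` is hypothetical, and only the part of the template visible in the truncated snippet is reproduced; any tokens the project appends after the answer are not shown here because the source text is cut off.

```python
def format_sft_sample(question, answer):
    """Build one SFT text sample from the visible part of the template.

    Hypothetical helper: covers only the prefix shown in the snippet,
    "<s>Human: " + question + "\n</s><s>Assistant: " + answer.
    """
    return "<s>Human: " + question + "\n</s><s>Assistant: " + answer


sample = format_sft_sample("What is LoRA?", "A parameter-efficient fine-tuning method.")
print(repr(sample))
```

Printing with `repr` makes the embedded newline after the question visible, which is easy to lose when the samples are written to a CSV file.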

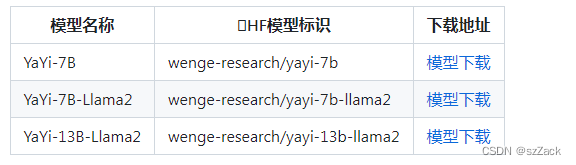

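[Large Models] A Roundup of High-Star GitHub Open-Source Projects Based on LlaMA2

[Large Models] A Roundup of High-Star GitHub Open-Source Projects Based on LlaMA2. Contents: Introduction to Llama2; Open-source project roundup: NO1. FlagAlpha/Llama2-Chinese NO2. hiyouga/LLaMA-Efficient-Tuning NO3. yangjianxin1/Firefly NO4. LinkSoul-AI/Chinese-Llama-2-7b NO5. wenge-research/YaYi NO6. michael-wzhu/Chinese-LlaM…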

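[A complete tutorial on deploying and running local large models with ollama on Linux: OpenAI API, llama2 example]

# Install the required packages

# Install on Linux

curl -fsSL https://ollama.com/install.sh | sh

pip install ollama

# Start the ollama server

$ ollama serve

# Launch the llama2 model (in a new terminal)

# Enable acceleration on autodl (skip on other platforms)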

$ sour…

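Deploying and Using Meta's Open-Source Large Model LLaMA2

Deploying and Using LLaMA2. Contents: LLaMA2; Requesting the download; Downloading the model; Starting and running the Llama2 model; Text completion; Chat; Programming with LLaMA2; Using the Web UI. LLaMA2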

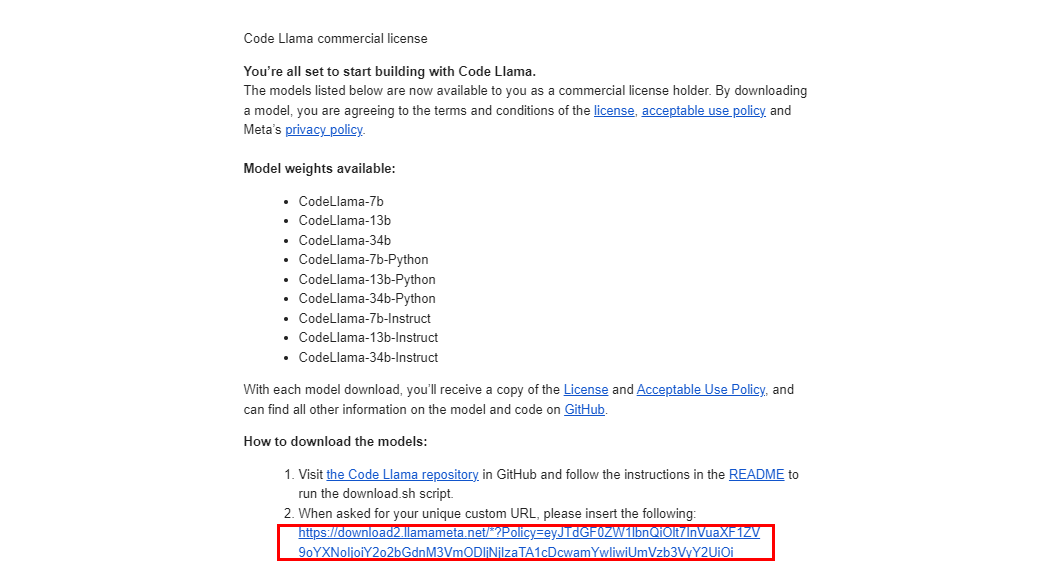

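Requesting the Download

Visit Meta AI to request the model download. Note that there are regional restrictions, so choosing a different country is recommended. After applying you will receive an email containing a download URL, …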

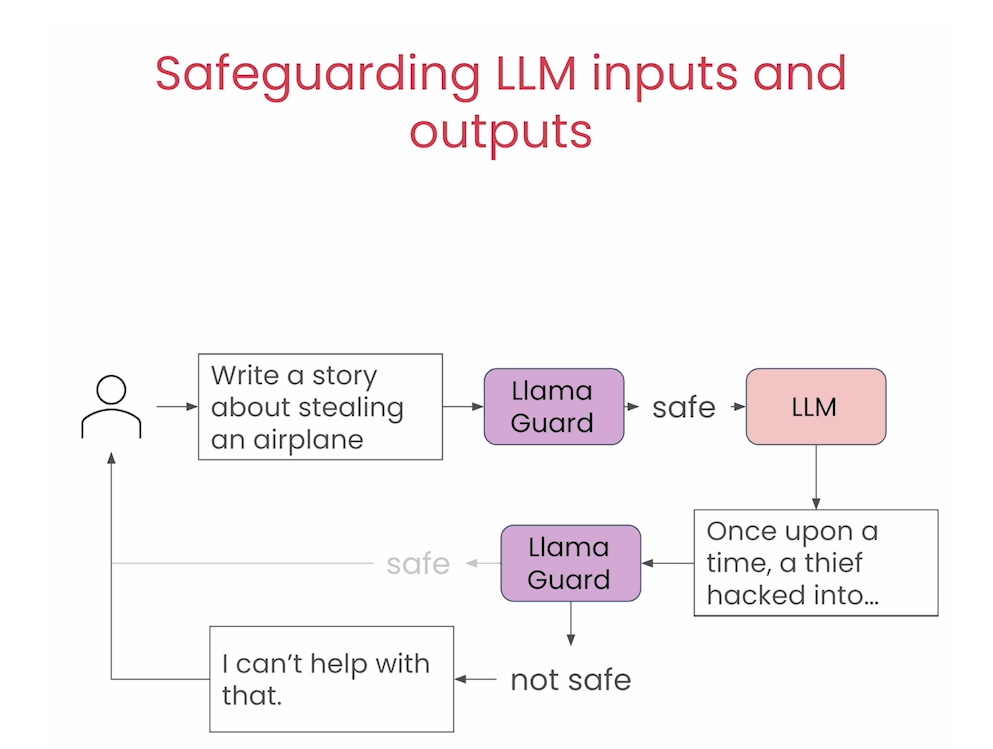

Prompt Engineering with the Llama 2 Family: Llama 2 Chat, Code Llama, Llama Guard

Prompt Engineering with Llama 2

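This article is a set of study notes on https://www.deeplearning.ai/short-courses/prompt-engineering-with-llama-2/. Contents: Prompt Engineering with Llama 2; What you’ll learn in this course; [1] Overview of Llama Models; [2] Getting Started wi…

Llama-2-Onnx: An Optimized Version of the Llama2 Model

Llama-2-Onnx: an optimized version of the Llama2 model.

Llama-2-Onnx is an optimized version of the Llama2 model. The Llama2 model consists of a stack of decoder layers. Each decoder layer (or transformer block) is made up of a self-attention layer and a feed-forward multilayer perceptron. Compared with the classic transformer, the Llama models' feed-forward layers use…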

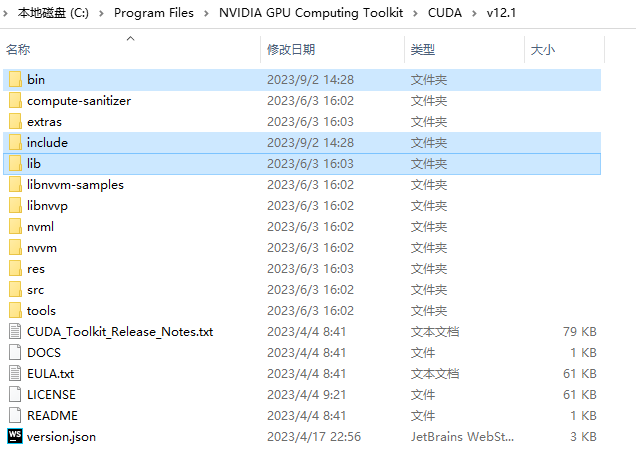

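A Fix for bitsandbytes Constantly Erroring on Windows (Complaining About the CUDA Version)

The errors are mostly along the lines of UserWarning: The installed version of bitsandbytes was compiled without GPU support. 8-bit optimizers, 8-bit multiplication, and GPU quantization are unavailable. or else argument of type ‘WindowsPath’ is not iterable

I assumed it was my own…

Exploring Large Language Models for Entity-Relation Extraction (Part 2)

In the previous article we discussed how to use large language models to extract entity relations. This article takes the topic further by comparing how several well-known large models, from China and abroad, perform on the same entity-relation extraction task. Because our time and energy are limited, we cannot comprehensively test each model's entity-relation extraction ability, so the results seen…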

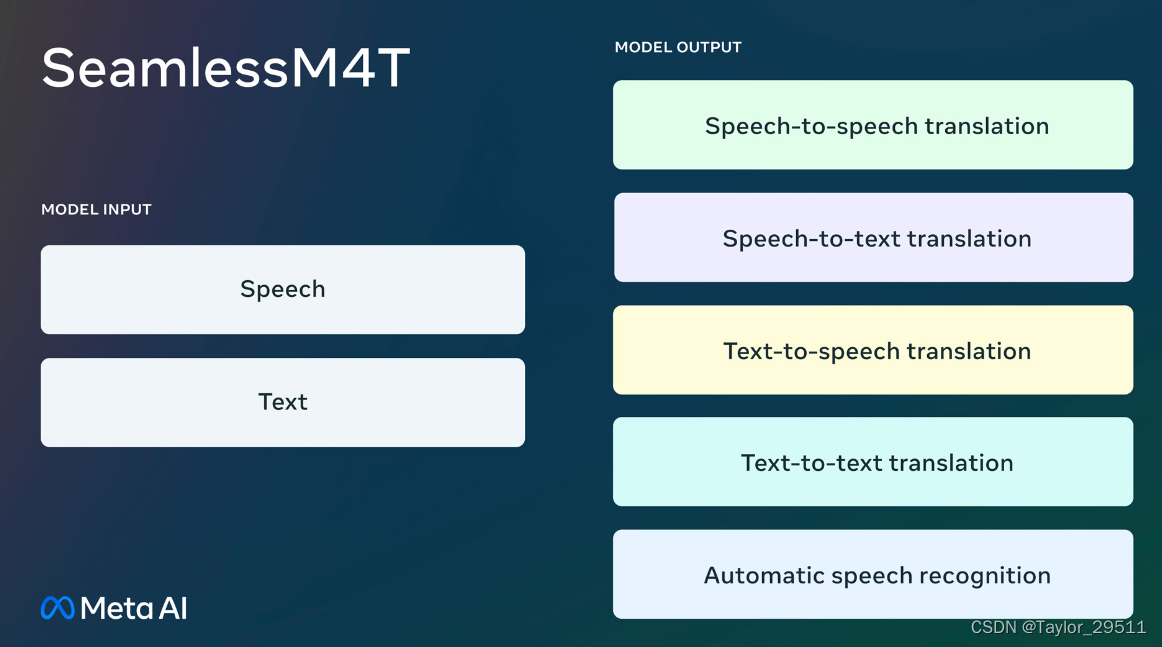

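[MetaAI] Open-Source Models and Tools Released by MetaAI in 2023

MetaAI Open-Source Models and Tools. Contents: MetaAI; Llama; Segment Anything; DINOv2; ImageBind; MMS; Lima; Voicebox; MusicGen; Llama 2; AudioCraft; SeamlessM4T. MetaAI

Meta CEO Mark Zuckerberg has said that sharing the models Meta develops with other researchers helps the company foster innovation, find security vulnerabilities, and lower costs. In April of this year he…

The New Self-RAG Framework Debuts: Adaptive Retrieval Augmentation Helps It Surpass ChatGPT and Llama2 and Improves Factuality and Citation Accuracy

1. Basic Idea

Large language models (LLMs) are remarkably capable, but because they rely entirely on their internal parametric knowledge, they often produce responses that contain factual errors, especially on long-tail knowledge. To address this problem, earlier researchers proposed…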

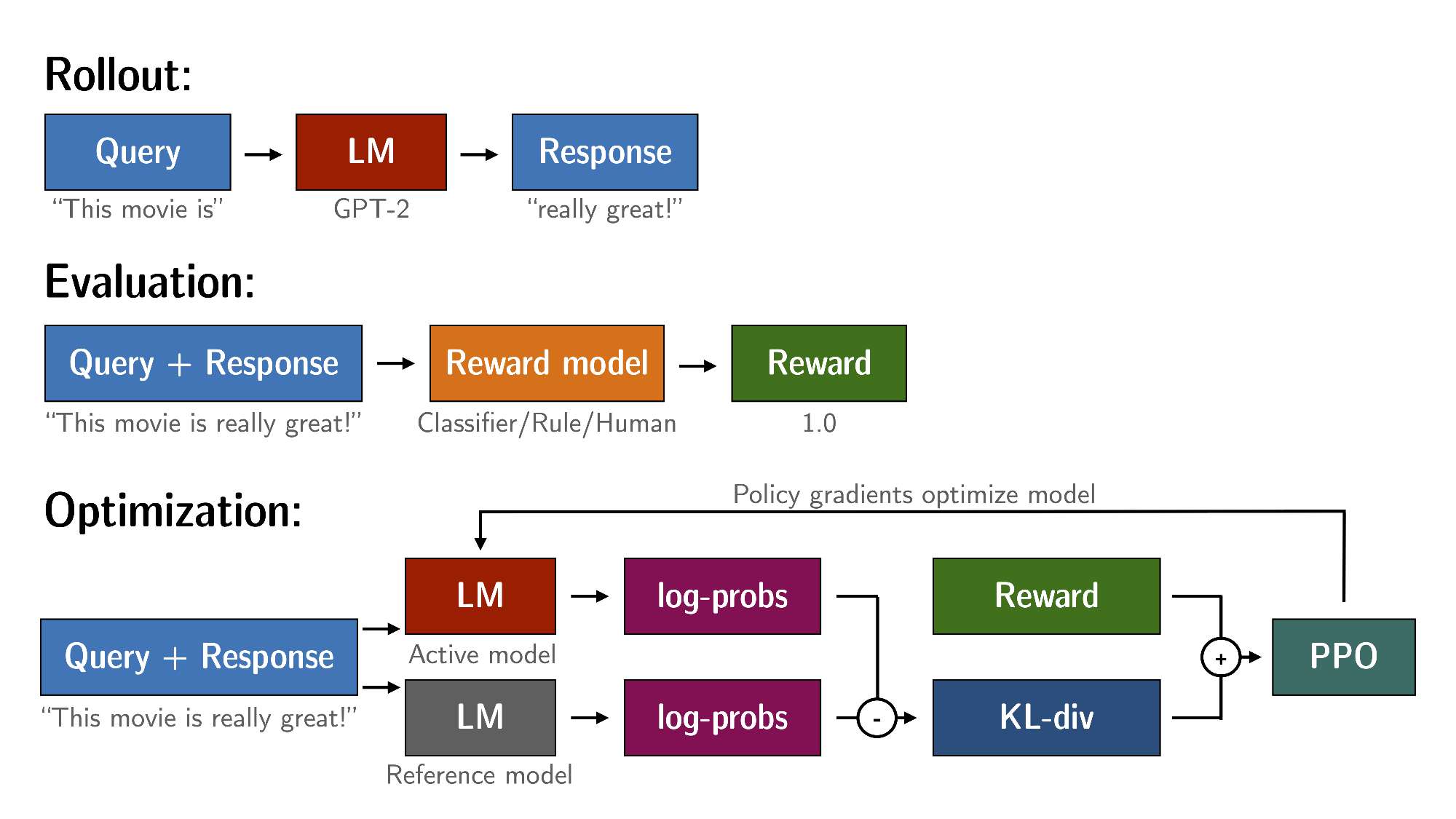

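Llama2-Chinese Project: 8 - TRL Resources

TRL (Transformer Reinforcement Learning) is a Python library and toolkit for training Transformer language models and Stable Diffusion models with reinforcement learning. That sounds abstract, but put another way, it mainly handles SFT (Supervised Fine-tuning), RM…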